AI adoption isn't a one-time event. It's a journey, and most organizations are taking it in the wrong order.

The pattern is predictable: leadership gets excited, a tool gets purchased, a pilot gets launched, and then friction. Employees don't trust it. Data isn't ready. No one owns the outcomes. The pilot quietly fades, and the organization concludes that AI "just isn't there yet."

The real problem isn't the technology. It's the sequence.

Over the past three years, only 25% of AI initiatives have delivered their expected ROI. Not because AI doesn't work, but because most organizations skip the foundational work that makes it work. They treat adoption as a rollout when it's actually a transformation.

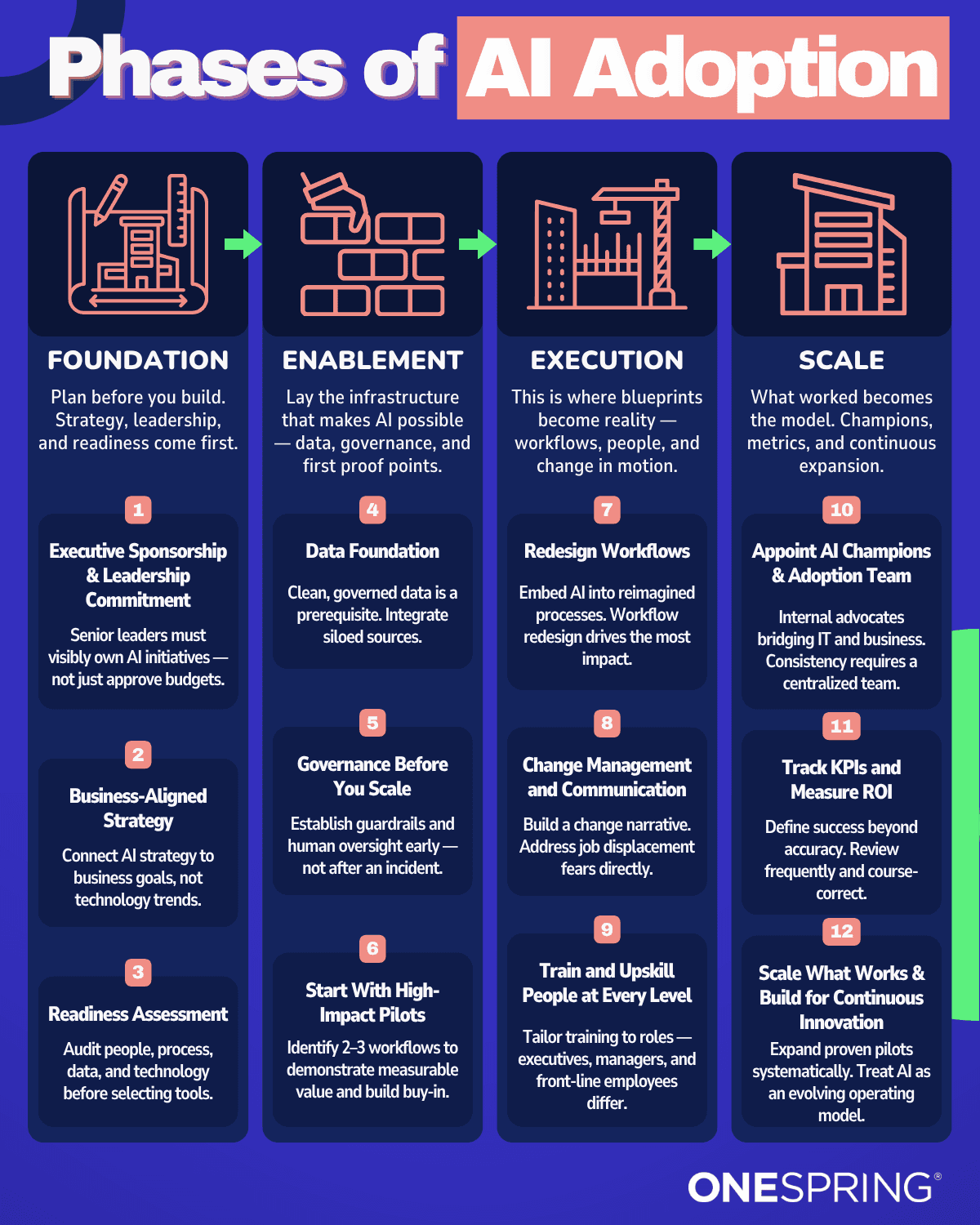

The organizations that get it right follow a deliberate progression across four phases: Foundation, Enablement, Execution, and Scale. Each phase builds on the last. Skip one, and the next phase becomes much harder to sustain.

Phase 1: Foundation

Before a single tool is evaluated, three things need to be in place. Without them, everything downstream is built on sand.

Executive Sponsorship That Goes Beyond Budget Approval

Leaders must visibly own AI adoption. That means showing up in town halls, sponsoring pilot teams publicly, and making it clear that AI is a strategic priority, not an IT project. When executives delegate AI to a working group and disappear, the signal to the organization is that this is optional. Adoption stalls before it starts.

Sponsorship isn't just symbolic. It shapes how quickly cross-functional teams get resources, how conflicts between departments get resolved, and whether change management gets funded at all.

Business-Aligned Strategy

AI without a North Star drifts. Every organization needs to connect AI investment to specific business outcomes: reducing cycle time in a particular workflow, improving a customer-facing process, or accelerating a decision that currently takes too long.

The question isn't "where can we use AI?" It's "what outcomes matter most, and where does AI create a clear path to them?"

Readiness Assessment

This is the most skipped step and the most consequential. Before selecting any tools, organizations need an honest look at four dimensions:

People: Who will use AI, and what's their current comfort level?

Process: Which workflows are candidates, and how well-documented are they?

Data: Is the data clean, accessible, and governed?

Technology: What infrastructure exists, and where are the gaps?

A readiness assessment doesn't slow adoption down. It prevents the false starts that do.
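One way to make the four dimensions concrete is to score each one and see which fall short. The sketch below is illustrative only: the 1-to-5 scale, the threshold of 3, and the `ReadinessAssessment` name are assumptions, not part of any prescribed method.

```python
from dataclasses import dataclass

# The four dimensions named in the article.
DIMENSIONS = ("people", "process", "data", "technology")


@dataclass
class ReadinessAssessment:
    """Hypothetical scorecard: each dimension rated 1 (not ready) to 5 (ready)."""
    people: int
    process: int
    data: int
    technology: int

    def scores(self) -> dict[str, int]:
        return {name: getattr(self, name) for name in DIMENSIONS}

    def gaps(self, threshold: int = 3) -> list[str]:
        """Dimensions scoring below the assumed threshold, weakest first."""
        low = [(score, name) for name, score in self.scores().items() if score < threshold]
        return [name for _, name in sorted(low)]


# Example ratings (made up for illustration).
assessment = ReadinessAssessment(people=4, process=3, data=2, technology=1)
print(assessment.gaps())  # ['technology', 'data']
```

The point of a scorecard like this is the conversation it forces: the lowest-scoring dimensions are where foundational work should start before any tool is chosen.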

Phase 2: Enablement

With a strategic foundation in place, the work shifts to building the conditions for AI to actually function. This phase is about infrastructure, guardrails, and early proof points, in that order.

Data Foundation

Clean, accessible, governed data is a prerequisite for AI. Not a nice-to-have. Not something to sort out later. AI models are only as reliable as the data they're built on, and organizations that skip this step often discover it the hard way: when a pilot produces results no one can trust or explain.

This doesn't require a multi-year data modernization effort before anything gets started. It requires knowing which data is needed for specific use cases, and ensuring that data is accurate, consistent, and accessible to the right people.
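As a rough illustration of what "accurate, consistent, and accessible" can mean for a single use case, the sketch below checks one dataset for missing required fields and duplicate keys. The function name, the field names, and the sample records are all hypothetical; a real check would depend on the workflow in question.

```python
def data_quality_report(records, required_fields, key_field):
    """Summarize completeness and duplicate keys for one use case's data."""
    total = len(records)
    missing = {field: 0 for field in required_fields}
    seen_keys = set()
    duplicate_keys = 0

    for record in records:
        # Count empty or absent values for each field the use case depends on.
        for field in required_fields:
            if record.get(field) in (None, ""):
                missing[field] += 1
        # Count repeated identifiers, a common consistency problem.
        key = record.get(key_field)
        if key in seen_keys:
            duplicate_keys += 1
        seen_keys.add(key)

    completeness = {
        field: (total - count) / total if total else 0.0
        for field, count in missing.items()
    }
    return {"rows": total, "completeness": completeness, "duplicate_keys": duplicate_keys}


# Made-up support-ticket records for a hypothetical triage pilot.
tickets = [
    {"ticket_id": 1, "category": "billing", "resolved_at": "2024-01-03"},
    {"ticket_id": 2, "category": "", "resolved_at": "2024-01-04"},
    {"ticket_id": 2, "category": "login", "resolved_at": None},
    {"ticket_id": 3, "category": "login", "resolved_at": "2024-01-06"},
]
report = data_quality_report(tickets, ["category", "resolved_at"], "ticket_id")
print(report)
```

Scoping the check to the fields one pilot needs keeps the effort small, which is the difference between readiness work and a multi-year modernization program.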

Governance Before You Scale

Governance sounds like a constraint. In practice, it's what makes scaling possible. Establishing clear policies around AI use (who can deploy what, how outputs get reviewed, and what's off-limits) protects the organization from incidents that erode trust and create regulatory exposure.

The organizations that build guardrails early move faster later. Those that skip governance spend months recovering from the incident that finally forced the conversation.
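Guardrails are easier to apply consistently when they are written down in a form that can be checked. The sketch below encodes the three policy questions (who can deploy what, which outputs get reviewed, what is off-limits) as plain data. Every tool, team, and use-case name here is a made-up placeholder, and the structure is one possible shape, not a standard.

```python
# Hypothetical policy; all names are placeholders for illustration.
POLICY = {
    # Who can deploy what: tool -> teams approved to deploy it.
    "allowed_deployers": {
        "chat_assistant": {"it", "operations"},
        "document_summarizer": {"it"},
    },
    # How outputs get reviewed: tools whose outputs need human review.
    "review_required": {"document_summarizer"},
    # What's off-limits, regardless of tool or team.
    "prohibited_uses": {"hiring_decisions"},
}


def check_deployment(tool, team, use_case, policy=POLICY):
    """Return (allowed, reason) for a proposed deployment."""
    if use_case in policy["prohibited_uses"]:
        return False, "use case is off-limits"
    if team not in policy["allowed_deployers"].get(tool, set()):
        return False, "team is not approved to deploy this tool"
    if tool in policy["review_required"]:
        return True, "allowed; outputs require human review"
    return True, "allowed"


print(check_deployment("chat_assistant", "operations", "ticket_triage"))
print(check_deployment("document_summarizer", "marketing", "contract_summary"))
print(check_deployment("chat_assistant", "it", "hiring_decisions"))
```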

High-Impact Pilots

| What Makes a Good Pilot | What to Avoid |
|---|---|
| Targets a specific, measurable workflow | Broad "AI transformation" initiatives |
| Has a defined owner and timeline | Pilots with no clear success criteria |
| Demonstrates value in weeks, not quarters | Starting with the most complex use case |
| Builds visible momentum across teams | Pilots hidden inside a single department |

Identify two or three workflows where AI can demonstrate measurable value quickly. Early wins aren't just proof of concept. They're the organizational trust-builders that unlock Phase 3.

Phase 3: Execution

This is where most organizations finally feel like they're "doing AI." It's also where the people-first principle matters most. Embedding AI into workflows without addressing the human side of that change is the fastest path to resistance, workarounds, and quiet abandonment.

Redesign Workflows — Don't Just Automate Them

The instinct is to take an existing process and add AI to it. That rarely produces meaningful results. The better question is: if AI were available from the start, how would we design this workflow differently?

Reimagining processes around AI capabilities, rather than retrofitting AI into legacy processes, is what separates organizations that see marginal efficiency gains from those that achieve structural improvements in how work gets done. AI-enabled workflows are expected to grow roughly eightfold by 2026, from 3% to 25% of all enterprise workflows. The organizations designing those workflows intentionally now will have a significant structural advantage.

Change Management Is Not Optional

Job displacement fears are real, and pretending they aren't accelerates the mistrust that kills adoption. The fastest way to derail an AI program is to build it on a foundation of ambiguity and silence.

Effective change management at this phase means:

Naming the fear directly: acknowledge that roles will change and be honest about what that means

Involving frontline employees early: people support what they help build

Communicating frequently: not just at launch, but throughout the entire transition

Framing AI as augmentation: most successful deployments make people more effective, not redundant

Train and Upskill Across Every Level

A single training session isn't a skill-building strategy. Executives, managers, and frontline employees each need different things. Executives need enough fluency to make good investment decisions. Managers need to understand how AI changes team workflows and performance expectations. Frontline employees need hands-on practice with the specific tools they'll use daily.

Training that treats all three groups the same delivers what none of them needs.

Phase 4: Scale

Scaling isn't about deploying AI everywhere at once. It's about expanding what's proven, sustaining momentum, and treating AI as an evolving operating model rather than a finished implementation.

Build an AI Champion Network

Internal advocates who bridge IT and the business are among the most underrated assets in an AI program. AI Champions aren't necessarily technical experts — they're credible voices within their departments who understand both the capabilities and the limitations of what's been deployed. They answer questions on the ground, surface friction before it becomes a problem, and keep adoption from reverting after the initial launch energy fades.

Track KPIs That Actually Reflect Adoption

Measuring AI success by model accuracy alone misses the point. The metrics that matter at scale include:

Adoption rate: what percentage of the intended users are actually using the tool?

Time saved: how much time per workflow has been recaptured, and where is it going?

Process quality: are error rates, decision speed, or output consistency improving?

Employee sentiment: do people feel more capable, or more anxious?

Accuracy is a technical metric. These are business metrics. Both matter, but only one tells you whether the program is working for the people it's supposed to serve.
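The four metrics above reduce to simple arithmetic once the inputs are collected. The sketch below shows one way to compute them; the function names, the 1-to-5 sentiment scale, and all of the sample numbers are assumptions made for illustration.

```python
def adoption_rate(active_users: int, intended_users: int) -> float:
    """Share of intended users who actually use the tool (0.0 to 1.0)."""
    if intended_users <= 0:
        raise ValueError("intended_users must be positive")
    return active_users / intended_users


def hours_saved(baseline_minutes: float, current_minutes: float, runs: int) -> float:
    """Total hours recaptured across `runs` executions of one workflow."""
    return (baseline_minutes - current_minutes) * runs / 60


def error_rate(errors: int, runs: int) -> float:
    """Fraction of workflow runs that ended in an error."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    return errors / runs


def mean_sentiment(survey_scores: list[int]) -> float:
    """Average of survey responses on an assumed 1-to-5 scale."""
    if not survey_scores:
        raise ValueError("survey_scores must not be empty")
    return sum(survey_scores) / len(survey_scores)


# Made-up numbers for one workflow, before and after the pilot.
snapshot = {
    "adoption_rate": adoption_rate(active_users=45, intended_users=60),
    "hours_saved": hours_saved(baseline_minutes=40, current_minutes=25, runs=200),
    "error_rate_before": error_rate(errors=12, runs=200),
    "error_rate_after": error_rate(errors=6, runs=200),
    "sentiment": mean_sentiment([4, 3, 5, 4]),
}
print(snapshot)
```

Tracking these per workflow, rather than as a single program-wide figure, makes it easier to see which pilots have earned expansion.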

Scale What Works — and Only What Works

The temptation at this phase is to expand AI broadly and quickly. Resist it. Expand the pilots that demonstrated clear, measurable value. Retire the ones that didn't. And treat the entire operating model as something that will continue to evolve — because AI capabilities, workforce skills, and business needs will all change faster than any static implementation plan can accommodate.

The organizations seeing the highest returns from AI aren't the ones who moved fastest. They're the ones who built the most deliberate foundation. Research shows that AI-first organizations, those with robust foundational capabilities and a transformational mindset, attribute more than half of their revenue growth and operating margin improvements to AI initiatives.

Image 1.0 - Four Phases of AI Adoption

The Through-Line: Trust Before Technology

Every phase of this framework comes back to the same principle: AI adoption succeeds or fails based on the human conditions surrounding the technology, not the technology itself.

Executive sponsorship is about trust in leadership. Readiness assessments are about respecting people's starting point. Change management is about honoring the anxiety that comes with real transformation. AI Champions are about sustaining trust at the ground level, long after the launch announcement.

The organizations that are winning with AI right now aren't necessarily the ones with the most sophisticated tools. They're the ones that took the time to build the right conditions — and had the discipline to follow the sequence.

Don't lead with technology. Lead with trust, clarity, and a sequence that actually holds.

If you're navigating AI adoption and want a clear-eyed assessment of where your organization stands, OneSpring's AI Readiness Mapping is designed to do exactly that — in weeks, not months.

Frequently Asked Questions

What are the four phases of AI adoption?

The four phases are Foundation (executive sponsorship, strategy, and readiness assessment), Enablement (data foundation, governance, and pilots), Execution (workflow redesign, change management, and training), and Scale (AI champions, KPI tracking, and expansion of proven use cases).

Why do most AI initiatives fail to deliver ROI?

Only 25% of AI initiatives deliver expected ROI because organizations skip foundational work—treating adoption as a technology rollout rather than a transformation. Most failures stem from lack of executive sponsorship, inadequate data governance, poor change management, or attempting to scale before proving value through pilots.

What is an AI readiness assessment?

An AI readiness assessment evaluates four dimensions before tool selection: People (user comfort and skills), Process (workflow documentation and candidates for AI), Data (quality, accessibility, and governance), and Technology (existing infrastructure and gaps). This assessment prevents false starts and ensures the organization is prepared for successful AI adoption.

Why is governance important before scaling AI?

Governance establishes clear policies around AI use—who can deploy what, how outputs are reviewed, and what's off-limits. Organizations that build guardrails early move faster later because they avoid incidents that erode trust and create regulatory exposure. Governance isn't a constraint; it's what makes scaling possible.

What makes a good AI pilot project?

Effective AI pilots target specific, measurable workflows with defined owners and timelines. They demonstrate value in weeks (not quarters) and build visible momentum across teams. Good pilots avoid broad "AI transformation" initiatives, unclear success criteria, overly complex starting use cases, and projects hidden within a single department.

How should organizations measure AI adoption success?

Success metrics should go beyond model accuracy to include adoption rate (percentage of intended users actually using the tool), time saved per workflow, process quality improvements (error rates, decision speed, output consistency), and employee sentiment. These business metrics reveal whether the AI program is working for the people it's supposed to serve.